It’s not surprising to see social media sites like Facebook flooded with a jumble of disparate opinions in the aftermath of a national news event, and the 2015 shooting in San Bernardino was no exception.

One advertisement on the social networking site, published the day after the tragedy, encouraged readers to “stop playing with fire and send all the Muslim invaders out of the country.” It was accompanied by an image of an archetypal red and blue-clad American cowboy, brandishing a lasso on horseback as if to round up the “invaders” himself.

Another ad, from a group called “United Muslims of America,” had a very different message: “Stop Islamophobia.” A second ad from the same group proclaimed, “I’m Muslim and I’m proud.”

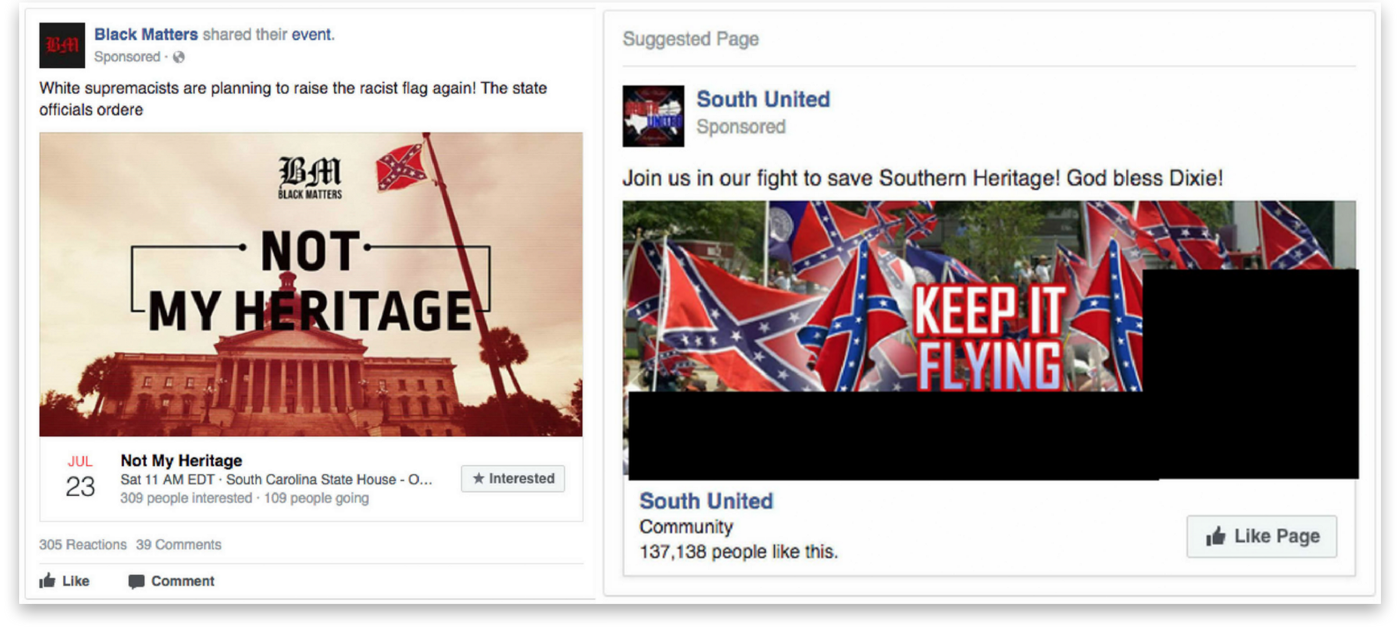

Perhaps the content of these ads seems normal in our polarized social media age, but there is something strange about these specific posts. All three were paid for by the same people.

Between 2015 and 2017, a Saint Petersburg-based organization called the Internet Research Agency (IRA) spent about $100,000 on more than 3,000 Facebook advertisements. Its goal, according to House Intelligence Committee ranking member Rep. Adam Schiff (D-Calif.), was to “sow discord in the U.S. by inflaming passions on a range of divisive issues.”

All told, the ads were seen more than 40 million times.

The IRA, working at the direction of the Kremlin, posted its ads under dozens of pseudonyms modeled after social movements in the United States and used Facebook’s profiling capabilities to precisely target them at demographic groups.

It was hardly a challenge to find ads playing into both sides of any controversial issue. In the database of ads posted by the House Intelligence Committee, immigrants are described as “hardworking, educated, and proud,” while also “flooding America with drugs.” Police are sometimes “dirty and racist” and other times “brave and smart.”

We wanted to visualize this strategy of appealing to both sides and allow people to explore the language used by the Russians to stoke national tensions in the months leading up to and immediately following the 2016 presidential election.

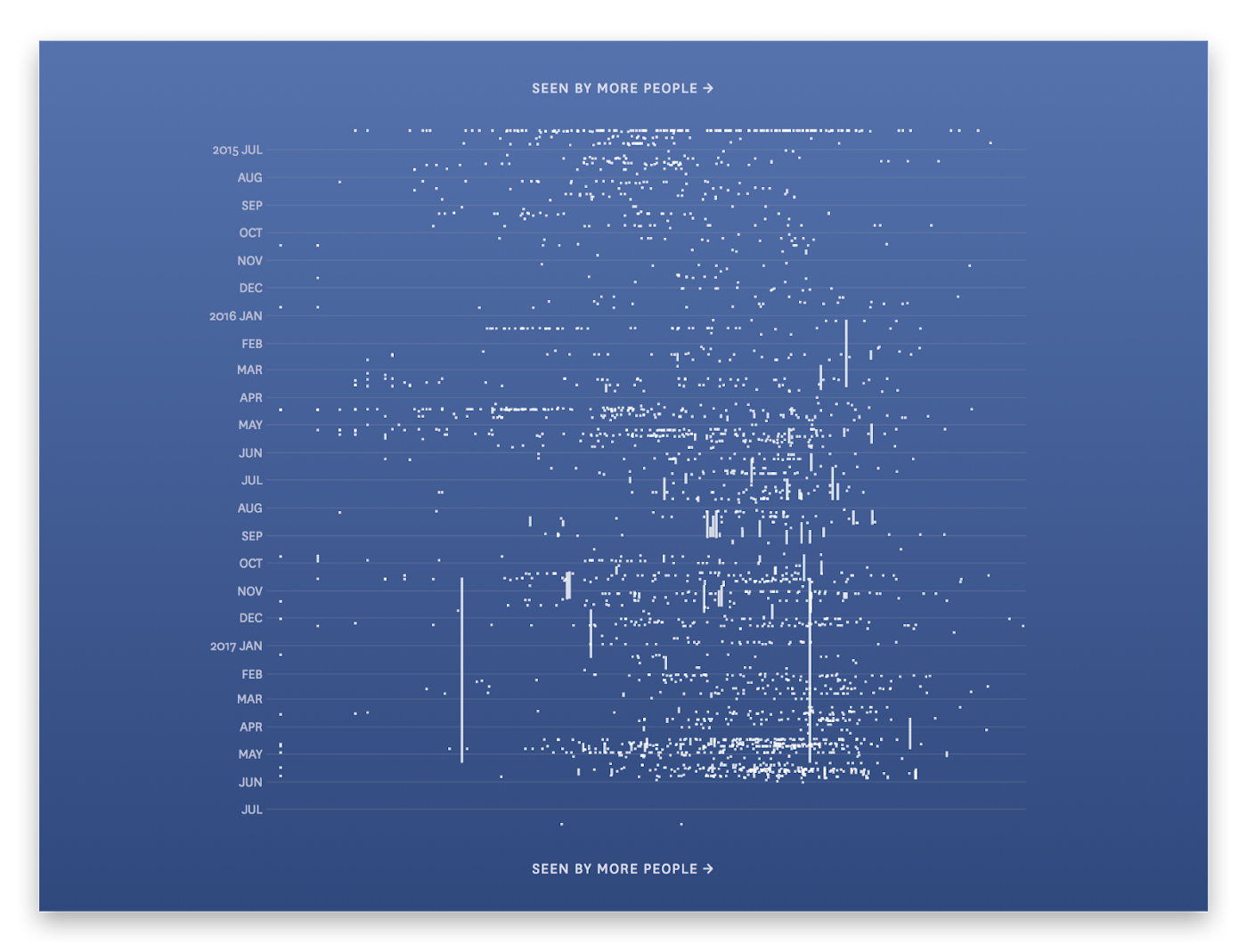

We started by showing all of the ads on an interactive chart that plotted the date they were posted against the number of people who saw them. With the addition of some searching and filtering tools, this gave a decent overview of the topics covered by the ads, but it required a lot of work by the reader to get to any specific insights.

This abstract view, with ads represented by lines and dots, had another problem: it took us a step away from the actual content of the ads, which we thought was the most important and interesting part. This could have been a visualization of any data set that’s based on a timeline, and we wanted to make sure that the representation was unique to the data it portrayed.

The language used in the ads was fascinating in its mix of the banal and the hyper-inflammatory. Ads about Texas barbecue were posted alongside ones encouraging the state to secede. Pointless memes were juxtaposed with pointed racism.

Thus, we decided to make the text of the ads the primary focus of the tool.

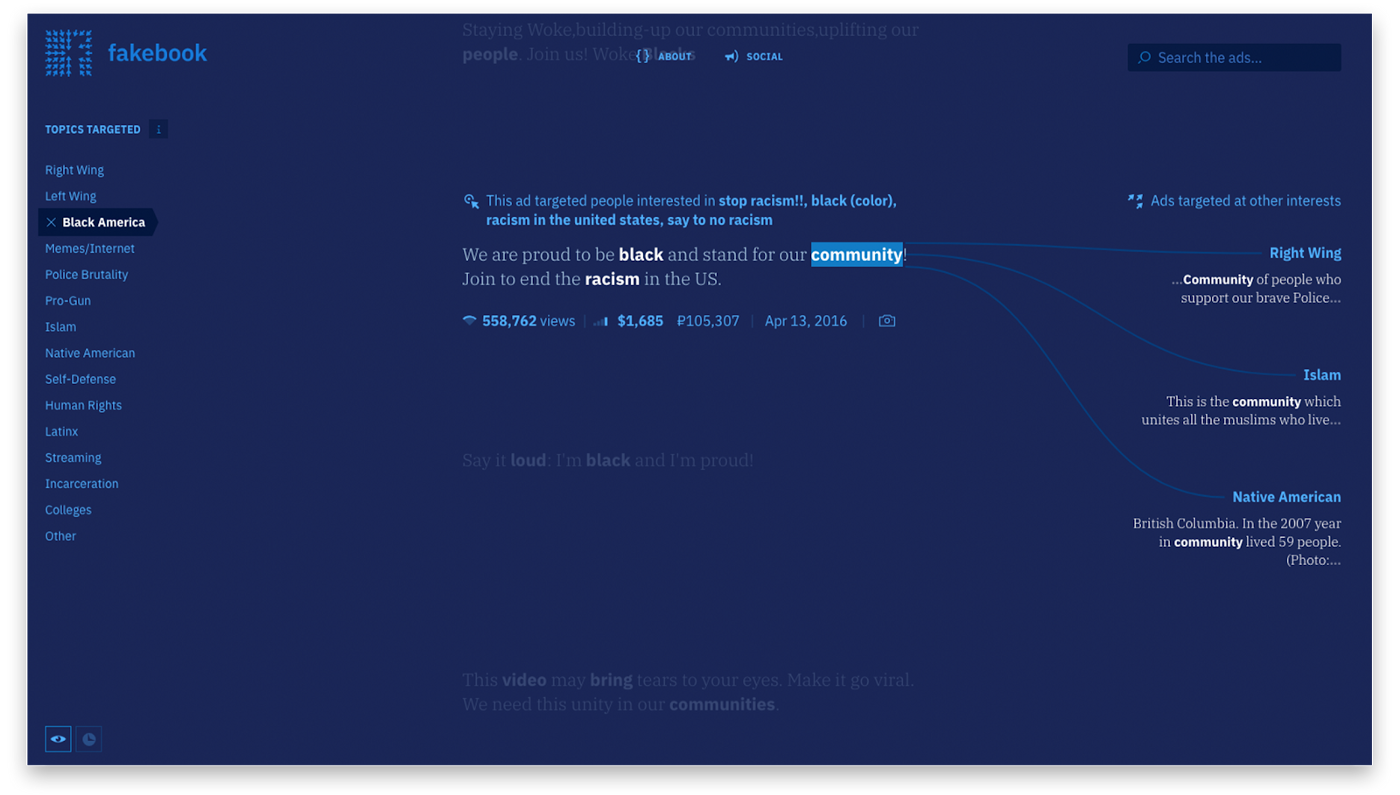

We wanted to create an experience that allowed readers to both engage directly with the advertisements and see how the same topics were discussed in ads targeted at different demographics. To get at the latter point, we highlighted key terms in each ad and showed how those words were used in different contexts.

For example, in ads targeted at people interested in right-wing issues, the word “gun” was usually used in the context of advocating for Second Amendment rights. Meanwhile, in ads targeted at people interested in black history, “gun” was usually used in discussing police violence.
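The idea of showing one key term in different contexts can be sketched in a few lines of Python. This is only an illustration of the general keyword-in-context approach, not the code behind the tool: the record fields (`audience`, `text`), the function name `contexts_by_audience`, and the sample sentences are all invented placeholders rather than entries from the House Intelligence Committee database.

```python
import re
from collections import defaultdict

# Hypothetical records: the field names and the sample sentences are
# placeholders made up for this sketch, not real ads from the database.
ADS = [
    {"audience": "group_a", "text": "Protect your right to own a gun."},
    {"audience": "group_b", "text": "He was unarmed when the officer drew a gun."},
    {"audience": "group_a", "text": "Best barbecue in the state."},
]

def contexts_by_audience(ads, term, window=3):
    """Collect short snippets around `term`, grouped by targeted audience."""
    results = defaultdict(list)
    for ad in ads:
        # Split the ad text into words, dropping punctuation.
        words = re.findall(r"[\w']+", ad["text"])
        for i, word in enumerate(words):
            if word.lower() == term.lower():
                # Keep up to `window` words on each side of the match.
                start = max(0, i - window)
                snippet = " ".join(words[start:i + window + 1])
                results[ad["audience"]].append(snippet)
    return dict(results)

snippets = contexts_by_audience(ADS, "gun")
for audience, found in snippets.items():
    print(audience, found)
```

Grouping the snippets by audience is what makes the contrast visible: the same word sits next to very different neighbors depending on who the ad was aimed at.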

Visit the tool online: fathom.info/fakebook

There was, however, one opinion all the ads held in common: Hillary Clinton should not be president. People interested in Black Lives Matter were told that “Hillary Clinton doesn’t deserve black votes.” In ads addressing people in Texas, she was referred to as “Killary Rotten Clinton.” People who had “liked” Bernie Sanders on Facebook saw ads suggesting they “disavow support for the Clinton political dynasty.”

There’s no denying it when you read through these ads: the Russians wanted Trump. (The U.S. Intelligence Community called it a “clear preference.”)

Knowing that makes it easy to see how these ads could be so deviously effective. Because they play into nearly every conceivable political and social opinion, it’s easy to find something you agree with.

So even if you didn’t hate Clinton, it’s easy to see how attacks on her from groups you otherwise agree with could have contributed towards a sense of apathy about the election in general. And even if it didn’t change your vote, being inundated with a stream of posts targeted directly at your interests, with the truth sometimes stretched to fit, must leave some impression.

Was the Russian government successful in its goal (according to U.S. intelligence agencies) of “undermining public faith in the U.S. democratic process”?

It’s hard to know for sure, though it’s certainly troubling that an ad bearing the Confederate flag and announcing that “the South will rise again” was viewed more than half a million times the month before the 2016 election.

Hopefully, by exploring these Facebook ads, readers can get a sense of the strategies and language used by the Russians and be better prepared to recognize them in the future. Because even though these ads only ran through mid-2017, these kinds of influence campaigns are far from over.

U.S. intelligence officials say the Russians “would have seen their election influence campaign as at least a qualified success,” and according to the U.S. director of national intelligence, this sort of cyber trickery is still happening today.

The best thing to do is stay informed and aware of these efforts, and we hope our tool will help.

We’d love to hear what you’re working on, what you’re intrigued by, and what messy data problems we can help you solve. Find us on the web, drop us a line at hello@fathom.info, or subscribe to our newsletter.
